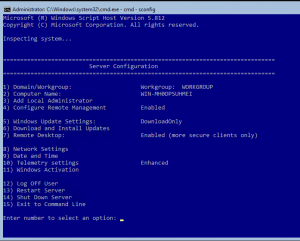

In a previous article we configured a single IIS web server using PowerShell commands.

Now we want to add some additional servers that will run the same websites, so that we can load balance incoming requests.

The first step is to create one or more additional web servers using the instructions in the previous article. This time, however, we don’t need to create folders, file shares, websites or application pools in IIS. We are going to sync all of that from our master server using DFS Replication and IIS Shared Configuration.

Installing DFS Replication on each web server

Install-WindowsFeature -Name FS-DFS-Replication -Confirm

Create the WebFarmFiles folder (if required) on each web server

New-Item -ItemType Directory -Path C:\WebFarmFiles
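If you are preparing several web servers at once, you can run the two steps above from a single console. This is a minimal sketch. It assumes PowerShell Remoting (WinRM) is enabled on each server and that your account is a local administrator on all of them. The function name Initialize-WebFarmNode and the server names are hypothetical.

```powershell
function Initialize-WebFarmNode {
    [CmdletBinding()]
    param(
        # Hypothetical server names; replace with your own web servers
        [Parameter(Mandatory)]
        [string[]]$ComputerName,

        # Local folder that DFS Replication will keep in sync
        [string]$ContentPath = 'C:\WebFarmFiles'
    )

    Invoke-Command -ComputerName $ComputerName -ScriptBlock {
        param($Path)

        # Install the DFS Replication role service and stop if it fails
        $result = Install-WindowsFeature -Name FS-DFS-Replication
        if (-not $result.Success) {
            throw "DFS Replication install failed on $env:COMPUTERNAME"
        }

        # Create the replicated folder only if it does not exist yet
        if (-not (Test-Path -Path $Path)) {
            New-Item -ItemType Directory -Path $Path | Out-Null
        }

        # One status object per server
        [pscustomobject]@{
            Computer      = $env:COMPUTERNAME
            RestartNeeded = $result.RestartNeeded
            FolderExists  = Test-Path -Path $Path
        }
    } -ArgumentList $ContentPath
}
```

For example, Initialize-WebFarmNode -ComputerName WEB02, WEB03 returns one status object per server, including whether the feature install asked for a restart.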

Install management tools on a Full GUI machine

Unfortunately, the DFS management tools (even the PowerShell cmdlets) can currently only be installed on a Windows computer with the full GUI. You will therefore need another server (or workstation) that you can use to manage your DFS Replication Group. I am not sure why this is the case, but hopefully Microsoft will fix it soon.

Install-WindowsFeature -Name RSAT-DFS-Mgmt-Con -Confirm

Once the tools are installed, this cmdlet should run without errors:

Get-DfsReplicationGroup

Create a DFS Replication Group

Since we have been forced onto a full GUI machine anyway, you could do the DFS setup in the DFS Management console. We are having so much fun, though, so let’s keep going and set everything up with PowerShell.

Run the following command from the Full GUI management machine. Replace ‘YourGroup’ and ‘your.domain’ with the relevant values and feel free to modify the description to suit.

New-DfsReplicationGroup -GroupName YourGroup -DomainName your.domain -Description "DFS Replication between YourGroup servers to sync content and configuration" -Confirm

Add Members to the DFSR Group

Run the following command once for each computer that needs to be added to YourGroup, replacing WEB0X with the server name.

Add-DfsrMember -GroupName YourGroup -ComputerName WEB0X -Confirm

Add DFS Replicated Folder

New-DfsReplicatedFolder -GroupName YourGroup -FolderName WebFarmFiles -Confirm
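You can also wrap the group, member and folder steps above in one helper so the whole setup is repeatable. This is a minimal sketch. It assumes you run it on the full GUI management machine with the DFSR cmdlets installed. New-WebFarmReplicationGroup is a hypothetical name. It calls the same cmdlets shown above without -Confirm and passes -DomainName to each of them.

```powershell
function New-WebFarmReplicationGroup {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)] [string]$GroupName,
        [Parameter(Mandatory)] [string]$DomainName,
        # Every web server that should take part in replication (hypothetical names)
        [Parameter(Mandatory)] [string[]]$ComputerName,
        [string]$FolderName = 'WebFarmFiles'
    )

    # 1. Create the replication group in the given domain
    New-DfsReplicationGroup -GroupName $GroupName -DomainName $DomainName `
        -Description "DFS Replication between $GroupName servers to sync content and configuration"

    # 2. Add each server as a member, one call per server
    foreach ($computer in $ComputerName) {
        Add-DfsrMember -GroupName $GroupName -ComputerName $computer -DomainName $DomainName
    }

    # 3. Create the replicated folder (connections and memberships are configured afterwards)
    New-DfsReplicatedFolder -GroupName $GroupName -FolderName $FolderName -DomainName $DomainName
}
```

Usage would look like New-WebFarmReplicationGroup -GroupName YourGroup -DomainName your.domain -ComputerName WEB01, WEB02, WEB03.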

Add Connections between web servers

The command below creates a bi-directional connection between the servers WEB01 and WEB02. You can build different topologies by running it for different pairs of servers. For example, you may like a ‘hub and spoke’ setup, where WEB02, WEB03 etc. are all connected to WEB01 but not to each other. Alternatively, you might connect every server to every other server in more of a ‘full mesh’ setup, i.e. WEB01 <-> WEB02, WEB01 <-> WEB03 and WEB02 <-> WEB03.

Add-DfsrConnection -GroupName YourGroup -SourceComputerName WEB01 -DestinationComputerName WEB02 -Confirm
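Typing one Add-DfsrConnection per pair gets tedious as the farm grows, because a full mesh of n servers needs n(n-1)/2 connections. This is a minimal sketch of the full mesh case. It assumes, as described above, that each Add-DfsrConnection call gives a bi-directional connection. Get-MeshPair and Add-DfsrFullMesh are hypothetical helper names, and the server names are placeholders.

```powershell
function Get-MeshPair {
    # Returns every unordered pair of servers exactly once
    param([Parameter(Mandatory)] [string[]]$ComputerName)

    for ($i = 0; $i -lt $ComputerName.Count; $i++) {
        for ($j = $i + 1; $j -lt $ComputerName.Count; $j++) {
            [pscustomobject]@{
                Source      = $ComputerName[$i]
                Destination = $ComputerName[$j]
            }
        }
    }
}

function Add-DfsrFullMesh {
    param(
        [Parameter(Mandatory)] [string]$GroupName,
        [Parameter(Mandatory)] [string[]]$ComputerName
    )

    foreach ($pair in Get-MeshPair -ComputerName $ComputerName) {
        # One call per pair; the connection is two-way unless -CreateOneWay is given
        Add-DfsrConnection -GroupName $GroupName `
            -SourceComputerName $pair.Source `
            -DestinationComputerName $pair.Destination
    }
}
```

Get-MeshPair -ComputerName WEB01, WEB02, WEB03 yields the three pairs listed above. For a hub and spoke layout, you would instead loop over the spokes and keep WEB01 fixed as -SourceComputerName.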

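Configure the primary server’s membership (and set the staging quota to 8 GB)

The primary member’s content is treated as authoritative during the initial replication, so this should be the server you set up in the previous article.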

Set-DfsrMembership -GroupName YourGroup -FolderName WebFarmFiles -ContentPath C:\WebFarmFiles -StagingPathQuotaInMB 8192 -ComputerName WEB01 -PrimaryMember $true -Confirm

Configure the other members

Set-DfsrMembership -GroupName YourGroup -FolderName WebFarmFiles -ContentPath C:\WebFarmFiles -StagingPathQuotaInMB 8192 -ComputerName WEB02, WEB03 -Confirm
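Members pick up membership changes when they next poll Active Directory. This minimal sketch applies both membership commands above, then asks the members to poll AD straight away with Update-DfsrConfigurationFromAD, and finally lists the resulting settings. Set-WebFarmMembership is a hypothetical name. The property list passed to Select-Object is an assumption about what you will want to see.

```powershell
function Set-WebFarmMembership {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)] [string]$GroupName,
        # The server whose content should win the initial sync
        [Parameter(Mandatory)] [string]$PrimaryComputer,
        # The remaining web servers (hypothetical names)
        [string[]]$OtherComputer = @(),
        [string]$FolderName = 'WebFarmFiles',
        [string]$ContentPath = 'C:\WebFarmFiles',
        [int]$StagingQuotaMB = 8192
    )

    $allComputers = @($PrimaryComputer) + $OtherComputer

    # Primary member: authoritative copy for the initial replication
    Set-DfsrMembership -GroupName $GroupName -FolderName $FolderName -ComputerName $PrimaryComputer `
        -ContentPath $ContentPath -StagingPathQuotaInMB $StagingQuotaMB -PrimaryMember $true | Out-Null

    # All other members use the same local path and staging quota
    if ($OtherComputer.Count -gt 0) {
        Set-DfsrMembership -GroupName $GroupName -FolderName $FolderName -ComputerName $OtherComputer `
            -ContentPath $ContentPath -StagingPathQuotaInMB $StagingQuotaMB | Out-Null
    }

    # Ask each member to read the new configuration from Active Directory now
    Update-DfsrConfigurationFromAD -ComputerName $allComputers

    # Report what each member ended up with
    Get-DfsrMembership -GroupName $GroupName -ComputerName $allComputers |
        Select-Object ComputerName, FolderName, ContentPath, PrimaryMember, StagingPathQuotaInMB
}
```

Usage would look like Set-WebFarmMembership -GroupName YourGroup -PrimaryComputer WEB01 -OtherComputer WEB02, WEB03.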

Associated cmdlets to explore:

– Check the replication backlog between two members:
Get-DfsrBacklog -GroupName YourGroup -SourceComputerName WEB0X -DestinationComputerName WEB0Y

More on the DFSR module cmdlets: https://docs.microsoft.com/en-us/powershell/module/dfsr/set-dfsrconnection?view=win10-ps

– Check for DFSR errors:
Get-EventLog -LogName 'DFS Replication' -Newest 20

– Show the full message details of those events:
Get-EventLog -LogName 'DFS Replication' -Newest 20 | Format-List Message

– Restart the DFSR service (this may need to be run on all existing servers after adding a new member to the group):
Restart-Service -Name DFSR
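Rather than checking one pair at a time, you can loop the backlog check over every connection in the group. This is a minimal sketch that assumes the DFSR cmdlets are installed on the management machine. Get-WebFarmReplicationHealth is a hypothetical name. Get-DfsrBacklog lists pending files rather than returning a single total, and it may not return every file for a very large backlog. Treat the count as a quick indicator rather than an exact figure.

```powershell
function Get-WebFarmReplicationHealth {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)] [string]$GroupName,
        [string]$FolderName = 'WebFarmFiles'
    )

    # Check every connection that Get-DfsrConnection reports for the group
    foreach ($connection in Get-DfsrConnection -GroupName $GroupName) {
        $pending = @(Get-DfsrBacklog -GroupName $GroupName -FolderName $FolderName `
            -SourceComputerName $connection.SourceComputerName `
            -DestinationComputerName $connection.DestinationComputerName)

        [pscustomobject]@{
            Source       = $connection.SourceComputerName
            Destination  = $connection.DestinationComputerName
            # Number of pending files returned; may be capped for very large backlogs
            PendingFiles = $pending.Count
        }
    }
}
```

Piping the result to Format-Table gives a one-line-per-connection overview, e.g. Get-WebFarmReplicationHealth -GroupName YourGroup | Format-Table.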

Exporting IIS Configuration for Sharing

On the initial web server that we set up in the last article, you should already have a website and application pool set up. Rather than creating all of those settings again, let’s export the configuration so that we can use it on all of our web servers. Placing the exported files in our DFS folder will ensure that all of the web servers stay in sync as configuration changes are made.

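New-Item -ItemType Directory -Path C:\WebFarmFiles\Configuration

$KeyEncryptionPassword = ConvertTo-SecureString -AsPlainText -String 'SecurePa$$w0rd' -Force

Export-IISConfiguration -PhysicalPath "C:\WebFarmFiles\Configuration" -KeyEncryptionPassword $KeyEncryptionPassword

Note the single quotes around the password. Inside double quotes, PowerShell expands $ sequences such as $$ as variables, so the password actually used would silently differ from the one you typed.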

You should now have 3 files in the C:\WebFarmFiles\Configuration folder.
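To confirm the export worked, you can check for the files by name. This is a minimal sketch. It assumes the three files are applicationHost.config, administration.config and configEncKey.key, the names IIS uses for a shared configuration export. Test-IISConfigExport is a hypothetical name.

```powershell
function Test-IISConfigExport {
    param([string]$Path = 'C:\WebFarmFiles\Configuration')

    # Files that the export is expected to have written (assumed names)
    $expected = 'applicationHost.config', 'administration.config', 'configEncKey.key'

    # One row per expected file, showing whether it is present
    foreach ($name in $expected) {
        [pscustomobject]@{
            File   = $name
            Exists = Test-Path -Path (Join-Path -Path $Path -ChildPath $name)
        }
    }
}
```

Running it on WEB02 or WEB03 after DFS has had time to replicate is also a quick way to check that the files have arrived there.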

Enabling IIS Shared Configuration

Now that the IIS configuration from the initial server has been exported, all of our web servers (including the initial server) need to be set to look at the Shared Configuration files. As DFS is handling the synchronisation of these files between our servers, we can simply point each one at its local C:\WebFarmFiles\Configuration folder, and they will all be able to read and write changes to the configuration. On each server, run the following, using the same password that you used for the export:

$KeyEncryptionPassword = ConvertTo-SecureString -AsPlainText -String 'SecurePa$$w0rd' -Force

Enable-IISSharedConfig -PhysicalPath "C:\WebFarmFiles\Configuration\" -KeyEncryptionPassword $KeyEncryptionPassword
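Instead of logging on to each server, you can run the two commands above on every server through PowerShell Remoting. This also keeps the password out of your script. This is a minimal sketch. It assumes WinRM is enabled and the IISAdministration module is available on each web server. Enable-WebFarmSharedConfig and the server names are hypothetical. Get-IISSharedConfig is called at the end so that each server reports its shared configuration state.

```powershell
function Enable-WebFarmSharedConfig {
    [CmdletBinding()]
    param(
        # Every web server, including the initial one (hypothetical names)
        [Parameter(Mandatory)] [string[]]$ComputerName,
        # Must be the same password that was used with Export-IISConfiguration
        [Parameter(Mandatory)] [securestring]$KeyEncryptionPassword,
        # Local path on each server; DFS keeps the copies in sync
        [string]$PhysicalPath = 'C:\WebFarmFiles\Configuration'
    )

    Invoke-Command -ComputerName $ComputerName -ScriptBlock {
        param($Path, $Password)

        Import-Module IISAdministration

        # Point this server's IIS at the replicated configuration files
        Enable-IISSharedConfig -PhysicalPath $Path -KeyEncryptionPassword $Password

        # Report the resulting shared configuration state for this server
        Get-IISSharedConfig
    } -ArgumentList $PhysicalPath, $KeyEncryptionPassword
}
```

For example, collect the password with $password = Read-Host -AsSecureString -Prompt 'Key encryption password', then run Enable-WebFarmSharedConfig -ComputerName WEB01, WEB02, WEB03 -KeyEncryptionPassword $password.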

And that’s pretty much it. Your web servers should now all be up and running with the same sites that you had configured on the initial server. You can test each one by updating your hosts file to point to the individual IP address of each server and testing in the browser one by one. And obviously the next step from here is to configure a load balancer like HAProxy or NGINX to direct traffic across all of the servers in a fair and reasonable fashion. Stay tuned for the next episode.